Postings on science, wine, and the mind, among other things.

The Dynamic Origins of Emotion Concepts

Pop summaries of three papers

When someone tells you that they feel lonely, or thrilled, or nervous, these emotion words likely conjure in your mind a rich understanding of what is happening in their mind. This understanding could help you interpret their past behavior, predict what they are going to do next, or plan how you should interact with them. But where does this abstract, conceptual understanding of emotions come from, and how do people acquire it?

This blog post summarizes the key findings from three of my recent research papers that help to answer this question. These papers approach the question from different directions. The first paper examines how adults in the general population, as well as artificial neural networks, could learn emotion concepts. The second paper examines the acquisition of emotion concepts over the course of child development. The third paper examines how different people acquire different emotion concepts. Together these papers converge on a clear answer to their shared research question: people learn emotion concepts from emotion dynamics – specifically, from patterns in how people transition between different emotions.

Emotion concepts in adults

Thornton, M. A., Rmus, M., Vyas, A. D., & Tamir, D. I. (2023). Transition dynamics shape mental state concepts. Journal of Experimental Psychology: General.

[PDF]

[Preprint]

[Data & code]

Studying how adults learn emotion concepts is challenging, because most people have already acquired rich emotion concepts by the time they reach adulthood. Although we can try to manipulate these concepts, it would be an uphill battle: how likely is it that a 20-minute-long web experiment could measurably change your concept of happiness?

Rather than fighting this losing battle against people’s ingrained emotion concepts, we chose to transport our participants to a new world, in which they would have to learn new emotion concepts. Specifically, we conducted a series of nine experiments in which participants played the role of xenopsychologists on a mission to explore an alien world. Participants were introduced to the locals, and then observed their emotions unfold over time. After this learning period, participants rated the alien’s emotions, telling us which emotions they thought were more conceptually similar to each other, and which they thought were more different.

Example stimulus from one of the xenopsychology experiments

Behind the scenes, we experimentally manipulated the alien’s emotion dynamics, such that certain emotions were more likely to precede or follow others. The likelihood that a given emotion follows another is known as a transition probability. In previous research, we found that the transition probabilities between emotions that people experience in the real world are highly correlated with other people’s judgements of the conceptual similarity between those emotions. The more likely a transition between a pair of emotions, the more conceptually similar people judge them to be. This result hinted that transition dynamics might be involved in shaping the concepts, but these correlational data could not determine whether this effect was causal. Our xenopsychology experiments gave us experimental control that studying real emotions did not.
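To make the idea of a transition probability concrete, here is a minimal sketch of how one could estimate transition probabilities from an observed sequence of emotions. This is an illustration only, not the analysis code from the paper: the function name `transition_probabilities` and the emotion labels in `observed` are hypothetical.

```python
from collections import Counter, defaultdict

def transition_probabilities(sequence):
    """Estimate P(next emotion | current emotion) from one observed sequence."""
    counts = defaultdict(Counter)
    # Count how often each emotion is immediately followed by each other emotion.
    for current, following in zip(sequence, sequence[1:]):
        counts[current][following] += 1
    # Normalize each row of counts so that it sums to 1.
    return {
        current: {nxt: c / sum(nexts.values()) for nxt, c in nexts.items()}
        for current, nexts in counts.items()
    }

# Hypothetical observations of a single agent's emotions over time.
observed = ["happy", "happy", "tired", "sad", "happy", "tired", "tired", "sad"]
probs = transition_probabilities(observed)
print(probs["happy"])  # happy is followed by tired twice and by happy once
```

In this toy sequence, "happy" is followed by "tired" on two of three occasions, so the estimated probability of that transition is 2/3.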

Despite varying many aspects of the task and the alien’s emotion dynamics, all nine of the experiments that we conducted supported the hypothesis that the transition probabilities between emotions causally shaped how our participants conceptualized the alien’s emotions. There’s a lot more detail in the paper, including other measures of conceptual content, computational modeling of exactly how participants translate dynamics into concepts, and examinations of the complementary roles of dynamics and static features of emotions in shaping concepts. But the general message from these studies was clear: transition dynamics shape emotion concepts.

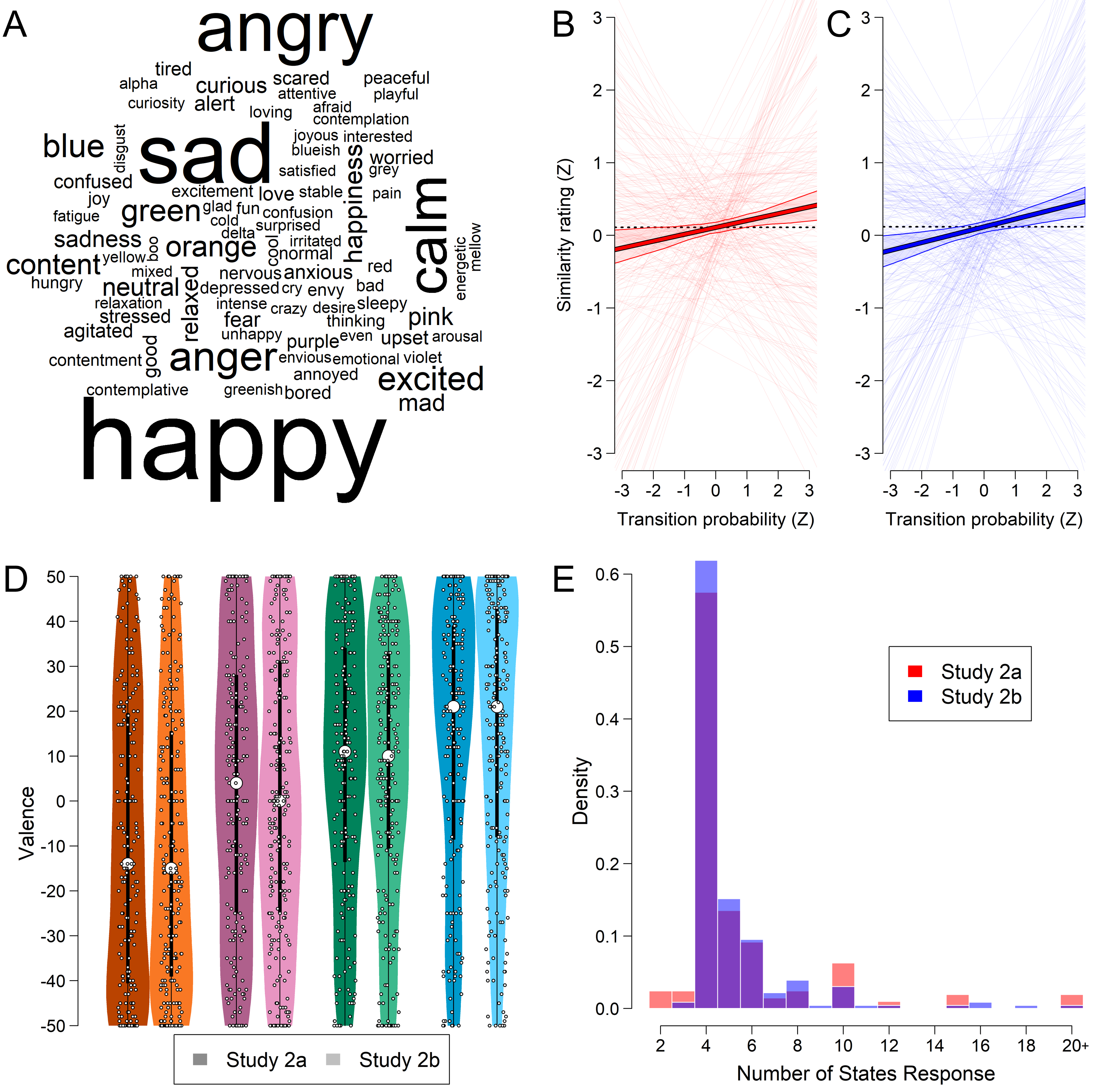

A) The word cloud visualizes the most common free-response labels given to the alien’s mental states, with more frequent words appearing in larger text. Higher transition probabilities predicted higher transition ratings in both Study 2a (B) and Study 2b (C). Thin lines represent participant level regressions between these variables, controlling for visual color similarity. The thick lines represent the mean slope, surrounded by a 95% bootstrap confidence interval. D) Colors reliably predicted valence ratings in Studies 2a and 2b. The distribution of valence is shown via violin plots, with raw ratings ranging from the most positive (+50) to most negative (-50) possible values. The large white circles indicate the median for each color. E) In both studies, outright majorities of participants correctly indicated that the number of states the alien had matched the number of attractors in the state space (four).

Emotion concepts in artificial neural networks

Thornton, M. A., Rmus, M., Vyas, A. D., & Tamir, D. I. (2023). Transition dynamics shape mental state concepts. Journal of Experimental Psychology: General.

[PDF]

[Preprint]

[Data & code]

The experiments with adult participants described above provide strong evidence that people could learn emotion concepts from emotion dynamics, but why would they do this? Participants are real people, and like all real people, they have a wide variety of different goals that they pursue. As much as we might sometimes like, participants do not simply forget these real-world goals the moment they walk into our labs, or open our experiment tab in their web browser. We naturally give participants a new set of goals in our experiments, but we cannot assume that they pursue those goals we set monomaniacally, to the exclusion of everything else they might value.

The multiplicity of potential goals that participants might be pursuing makes it hard to know how to interpret their behavior: are they doing something because we asked them to? Because they think it’s what we expect? Because they’re bored and just want to get through the study? In the present case, we hypothesized that the goal of predicting others’ future states was sufficient to account for why participants formed the emotion concepts they did. But we wanted stronger evidence to support this conclusion.

We therefore turned to a very different type of participant: artificial neural networks (ANNs). ANNs, in the form of deep learning, have become an extremely hot topic as of late. Large language models such as GPT have achieved remarkable new standards in producing human-like language, and image generation models like Stable Diffusion portend major disruption to the world of visual art. The ANNs we used in this study are at the other end of the scale of complexity from these famous models: they are close to the simplest ANNs one could possibly program.

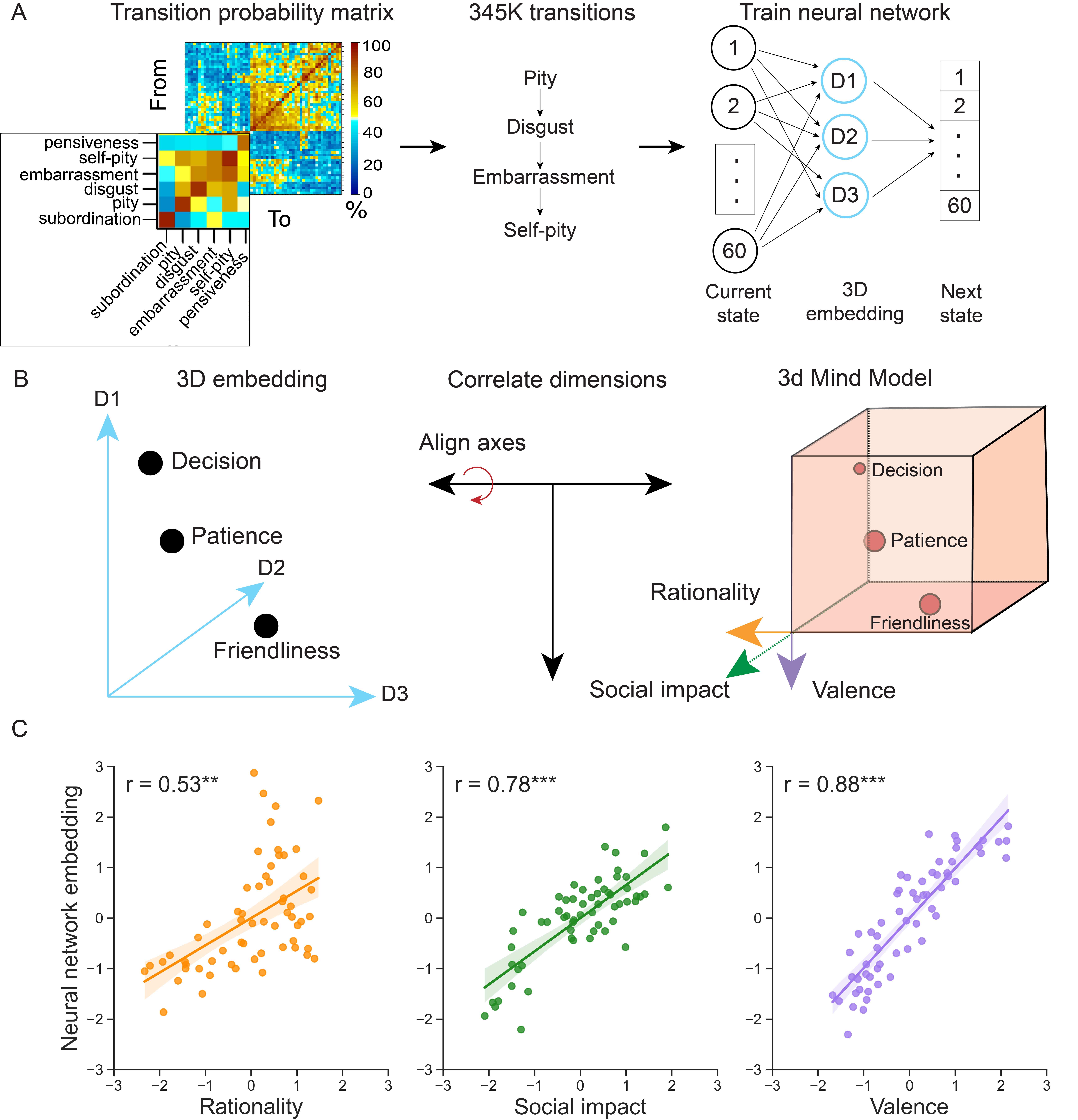

We trained three ANNs in parallel “experiments” to see if they would learn human-like emotion concepts from human emotion dynamics, given the right goal. Each of these networks took in as its input a sequence of emotions like “happy, happy, tired, sad…”. The network learned to convert these discrete labels into a continuous set of numbers – 1, 2 or 3 numbers, depending on the experiment. It then used these numbers to try to predict the next state in the sequence, based only on knowing the current state.

What we found was that the networks learned psychological dimensions that describe human emotion concepts. When the model was 1d, it learned the dimension of valence, or positive vs. negative. When the model was 2d, it learned valence plus arousal: how intense the emotion was. Finally, when the model was 3d, it learned the dimensions of the 3d Mind Model: valence, social impact (how much an emotion is likely to affect others), and rationality (how cognitive vs. emotional a mental state is). The fact that the models learned these dimensions spontaneously, given only the goal of predicting future emotions from current emotions, provides compelling evidence that the goal of predicting human emotion dynamics is sufficient to account for the conceptual structure people apply to them.
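For readers who want a feel for how small such a network can be, here is a toy sketch in plain Python. It is not the architecture or code from the paper. The four states, the transition matrix `P`, the learning rate, and every function name are assumptions chosen for illustration. The sketch maps each discrete state to a single number (a 1d bottleneck) and uses that number to predict the next state with a softmax, trained by stochastic gradient descent.

```python
import math
import random

STATES = ["happy", "calm", "sad", "angry"]  # toy labels, not the 60 states in the paper
# Hypothetical transition probabilities: same-valence transitions are more likely.
P = [
    [0.5, 0.3, 0.1, 0.1],
    [0.3, 0.5, 0.1, 0.1],
    [0.1, 0.1, 0.5, 0.3],
    [0.1, 0.1, 0.3, 0.5],
]

def sample_sequence(length, rng):
    """Generate a sequence of state indices by following P."""
    seq = [rng.randrange(len(STATES))]
    for _ in range(length - 1):
        seq.append(rng.choices(range(len(STATES)), weights=P[seq[-1]])[0])
    return seq

def predict(cur, emb, out_w, out_b):
    """Softmax prediction of the next state from the current state's embedding."""
    logits = [
        sum(emb[cur][d] * out_w[d][j] for d in range(len(out_w))) + out_b[j]
        for j in range(len(out_b))
    ]
    top = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - top) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def mean_loss(seq, emb, out_w, out_b):
    """Average cross-entropy of next-state predictions over the sequence."""
    pairs = list(zip(seq, seq[1:]))
    return -sum(math.log(predict(c, emb, out_w, out_b)[nx]) for c, nx in pairs) / len(pairs)

def train(seq, n_dims=1, epochs=10, lr=0.1, seed=0):
    rng = random.Random(seed)
    n = len(STATES)
    emb = [[rng.uniform(-0.1, 0.1) for _ in range(n_dims)] for _ in range(n)]
    out_w = [[rng.uniform(-0.1, 0.1) for _ in range(n)] for _ in range(n_dims)]
    out_b = [0.0] * n
    for _ in range(epochs):
        for cur, nxt in zip(seq, seq[1:]):
            probs = predict(cur, emb, out_w, out_b)
            # Gradient of cross-entropy with respect to the logits.
            grad = [p - (1.0 if j == nxt else 0.0) for j, p in enumerate(probs)]
            for d in range(n_dims):
                h = emb[cur][d]
                grad_h = sum(out_w[d][j] * grad[j] for j in range(n))
                for j in range(n):
                    out_w[d][j] -= lr * h * grad[j]
                emb[cur][d] -= lr * grad_h
            for j in range(n):
                out_b[j] -= lr * grad[j]
    return emb, out_w, out_b

sequence = sample_sequence(3000, random.Random(0))
emb, out_w, out_b = train(sequence)
final_loss = mean_loss(sequence, emb, out_w, out_b)
print("chance loss:", math.log(len(STATES)), "trained loss:", final_loss)
for name, vec in zip(STATES, emb):
    print(name, vec)
```

Because the toy transition matrix is organized by valence, the single learned number per state is the only route by which the network can beat chance, so inspecting `emb` after training is a quick way to see what a 1d bottleneck picks up. Changing `n_dims` to 2 or 3 gives the higher-dimensional variants, though with only four toy states there is little extra structure to find.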

A) Transition probabilities between 60 human mental states were used to generate a sequence of mental states with naturalistic dynamics. This sequence was used to train neural networks in Studies 3a and 3b with a singular goal: to predict the next state from the current state. B) The resulting dimensions learned by the networks were aligned with conceptual structures from the literature, including the 3d Mind Model in Study 3a, via cross-validated Procrustes transformations. The aligned dimensions were then correlated with one another to test whether the network had learned a human-like representational space. C) The networks spontaneously learned 1d, 2d, and 3d conceptual spaces. The 3d network in Study 3a recovered the conceptual dimensions of rationality, social impact, and valence from the transition dynamics. These results indicate that mental state dynamics – and the goal of predicting them – suffice to explain the structure of mental state concepts.

Emotion concepts in children

Nencheva, M. L., Nook, E. C., Thornton, M. A., Lew-Williams, C., & Tamir, D.I. (2023). The co-emergence of emotion vocabulary and organized emotion dynamics in childhood. PsyArXiv.

[Preprint]

[Data & code]

Having accumulated evidence from adults and ANNs that emotion dynamics shape emotion concepts, we wanted to examine whether we could actually see this process playing out in real life over the course of child development. If you know me or my long-time collaborator Diana Tamir – with whom I co-authored all of the papers described in this post – then you know that we’re not developmental psychologists. As a result, we needed more collaborators to answer this question effectively. We found these collaborators in my (then) colleagues at Princeton: Professors Casey Lew-Williams and Erik Nook, and graduate student Mira Nencheva, who led this project.

Mira’s studies focused on children’s emotional development from birth to age seven. Mira surveyed these children’s parents, asking them to report on their child’s emotion transitions. In some studies, this consisted of direct ratings of how often they thought their child transitioned from one emotion to another. In another study, we used experience sampling to indirectly measure emotion transitions: participants repeatedly reported on the children’s emotions, allowing us to calculate emotion transitions from one report to the next. We also measured how long children’s emotions lasted, and the children’s emotional vocabulary.

What we found was that older children experienced longer emotions, with more systematic (i.e., less random) transitions between different emotions. This increase in systematicity was supported by an increasing role for valence in shaping these emotion transitions. Compared to younger children, older children were more likely to transition within-valence (e.g., going from one negative emotion to another, rather than from a negative to a positive). The valence organization of children’s emotion transitions was correlated with larger emotional vocabulary, even after statistically controlling for age. These findings hint that the increasing regularity of children’s emotion dynamics might support their acquisition of emotion concepts. Together with the more direct causal evidence from the experiments with adults, Mira’s work provides convergent evidence for the importance of emotion dynamics in shaping emotion concepts.
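One simple way to quantify "within-valence" transitions from a series of repeated emotion reports is sketched below. This is an assumed, simplified measure for illustration, not necessarily the one used in the paper. The `VALENCE` lookup, the emotion labels, and the function name `within_valence_rate` are all hypothetical.

```python
# Hypothetical valence labels for a handful of emotions.
VALENCE = {
    "happy": "positive",
    "excited": "positive",
    "sad": "negative",
    "angry": "negative",
}

def within_valence_rate(reports):
    """Share of emotion changes that stay within the same valence.

    Consecutive identical reports are ignored, since they are not
    transitions between different emotions.
    """
    changes = [(a, b) for a, b in zip(reports, reports[1:]) if a != b]
    if not changes:
        return None  # no transitions between different emotions were observed
    same = sum(VALENCE[a] == VALENCE[b] for a, b in changes)
    return same / len(changes)

# Hypothetical series of reports about one child.
reports = ["happy", "excited", "excited", "sad", "angry", "happy"]
rate = within_valence_rate(reports)
print(rate)  # 2 of the 4 changes stay within valence
```

A higher value of this rate would correspond to emotion transitions that are more strongly organized by valence.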

Individual differences in emotion concepts

Barrick, E., Thornton, M. A., Zhao, Z., & Tamir, D. I. (2023). Individual differences in emotion prediction and implications for social success. PsyArXiv.

[Preprint]

[Data & code]

The last paper I will discuss here examines emotion concepts from yet another angle: individual differences. In this case, individual differences refer to the fact that not everyone conceptualizes emotions in the same way. For example, one person might find “calmness” to be very positive, whereas another might find it a bit boring and therefore less positive. How do people come to understand emotions differently, despite being embedded in the same culture?

In this study, led by Elyssa Barrick, we hypothesized that people draw on two broad sources of information to understand emotions: internal information about their own emotions, and external information gleaned from observing others’ emotions. If this is true, then it follows that two people with different internal or external experiences with emotion will wind up with different emotion concepts as well.

We tested this by examining how three variables correlated with people’s ability to predict others’ emotions: typicality, alexithymia, and emotion perception. First, typicality refers to how typical – or similar to average – an individual’s own emotion dynamics were, relative to the general population. We hypothesized that people with less typical emotion dynamics would learn emotion concepts that differ from others’, and therefore be less accurate at predicting others’ emotions. Second, alexithymia refers to difficulty identifying one’s own emotions. If a person cannot label what they are feeling, they are unlikely to be able to use their own experiences to learn about emotion concepts in the abstract. Being cut off from this vital source of information is also likely to make people less accurate at predicting others’ emotions. Finally, emotion perception refers to the ability to recognize others’ emotions – for instance, from their facial expressions. If one cannot identify others’ emotions, then one will likewise be cut off from information that is important for forming emotion concepts.

Across five studies, our results support these hypotheses: lower typicality, greater alexithymia, and lower emotion perception ability were all associated with less accurate emotion predictions. Moreover, these associations statistically mediated the relationship between emotion prediction accuracy and clinically relevant functional impairments, such as communication difficulties experienced by people with autism. These results lend further convergent evidence to the conclusion that learning emotion concepts relies, at least in part, on the ability to observe one’s own, and others’, emotion dynamics.

Looking ahead

Putting the three studies described here together provides compelling evidence that emotion dynamics shape emotion concepts. However, we still know relatively little about how exactly the brain achieves this learning. What algorithms allow the brain to translate observed dynamics into concepts? The first paper above contains some initial computational modeling of this process, but much work remains to be done in this space. Ongoing research in my lab is examining this question in two different projects.

First, we are currently part way through a set of studies in which we test whether longer sequences of emotions – rather than just transition probabilities from one emotion to the next – influence the formation of emotion concepts. Someone’s next emotion probably depends not only on the emotion they are currently experiencing, but also on some of the other emotions they have recently experienced – a property known as hysteresis or history-dependence. We are examining people’s intuitions about emotion hysteresis, how this hysteresis is encoded in the brain, and how it influences people’s explicit judgements about emotion concepts.
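As a rough illustration of what history-dependence means in practice, the sketch below compares predictions of the next emotion based on the current emotion alone with predictions that also take the preceding emotion into account. The sequence is made up so that the contrast is obvious, and the function name `conditional_next` is hypothetical. This is not the design of the ongoing studies.

```python
from collections import Counter, defaultdict

def conditional_next(sequence, order):
    """Estimate P(next emotion | the previous `order` emotions)."""
    counts = defaultdict(Counter)
    for i in range(order, len(sequence)):
        context = tuple(sequence[i - order:i])
        counts[context][sequence[i]] += 1
    return {
        ctx: {s: c / sum(nxt.values()) for s, c in nxt.items()}
        for ctx, nxt in counts.items()
    }

# Made-up sequence in which what follows "happy" depends on what came before it.
seq = ["calm", "happy", "calm", "sad", "happy", "sad",
       "calm", "happy", "calm", "sad", "happy", "sad"]

first_order = conditional_next(seq, order=1)
second_order = conditional_next(seq, order=2)

print(first_order[("happy",)])          # calm and sad are equally likely
print(second_order[("calm", "happy")])  # always calm
print(second_order[("sad", "happy")])   # always sad
```

Knowing only that the current emotion is "happy" leaves the next emotion ambiguous in this toy sequence, whereas adding one more step of history resolves it completely.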

Second, we are building more complex computational models of emotion understanding using larger, more sophisticated ANNs. In this line of work, we break emotion understanding down into three related but distinct parts: emotion perception, emotion prediction, and (social) emotion regulation. That is, how people infer others’ emotions from observable cues, how people predict others’ future emotions, and how people intervene to change others’ emotions. We anticipate that all three of these components will influence how people learn to conceptualize emotions. The use of ANN models will allow us to make precise quantitative predictions about how those influences on emotion concepts might be reflected in the brain.

Thank you for reading and keeping up with our work on this exciting topic! We look forward to having more to share in the months and years to come.

© 2023 Mark Allen Thornton. All rights reserved.